Rutgers graduate students presented their findings on “Effectiveness of AI-assisted report assessments: A case study of the United Nations Development Program” at the American Association for the Advancement of Science (AAAS) Annual Meeting’s poster competition in Phoenix, AZ, in mid-February.

The project is being undertaken by Rutgers graduate students Raquel Padilla (PhD Planning and Public Policy), Sandra Jae-Ah Chung (MPP/MCRP candidate), and Hemali Angne (PhD Computer Science), together with Bloustein School Professor Hal Salzman, all part of the Rutgers SOCRATES (Socially Cognizant Robotics for a Technologically-Enhanced Society) research group. The project is a collaboration with the United Nations Development Programme (UNDP) through its Independent Evaluation Office (IEO).

Hemali Angne is presenting at the AAAS poster competition before the judging panel.

The study assesses how effectively an AI tool based on a large language model (LLM) conducts quality assessments of UN project evaluation reports. Initial findings indicate that the language used in AI-generated assessments is more constrained than that of human reviewers. Furthermore, AI outputs lack nuance and context-awareness.

These limitations affect the usefulness of quality assessments in improving the reports and providing feedback to the development projects. This also raises the question of whether incorporating AI will effectively reduce operating costs or instead add new technology expenses that, while they provide improvements, still require a “human-in-the-loop.” The researchers suggest these findings have broader implications for the use of AI in knowledge work.

The UNDP funds a diverse set of projects in different countries, ranging from conserving biodiversity to increasing participation in local governance. Country offices prepare evaluation reports on these projects. Quality assessment of these reports is instrumental in improving the projects and giving feedback to country offices. The IEO currently hires external reviewers for this task, at a cost of around $180,000 per year. To reduce operational costs, the IEO is looking to replace reviewers with an AI tool, specifically a general-purpose LLM guided by prompts.

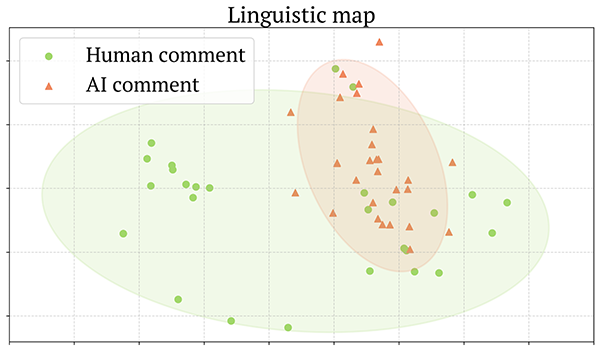

The Rutgers team conducted qualitative and linguistic analyses of the AI outputs and found shortcomings in their content. The main finding was that the AI struggled with implicit and culturally specific content, which is essential to the work of an international organization such as the UNDP. AI-generated comments were also monotonous and repetitive and did not reflect the diversity of the reports. A linguistic analysis confirmed this, using a computer model to arrange comments on different reports into a cohesive “linguistic map.”

Comments that share similarities are positioned closer together, while dissimilar comments are located farther apart. The analysis showed that the AI comments clustered much more tightly than the human comments.
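The idea behind such a map can be illustrated with a small sketch. The article does not say which model or distance measure the team used, so the code below makes its own assumptions: a simple bag-of-words vector stands in for the computer model, cosine distance stands in for position on the map, and the comments are invented for illustration and are not data from the study. It measures how spread out each pool of comments is by averaging the distance between every pair of comments in the pool.

```python
import math
from collections import Counter
from itertools import combinations


def vectorize(comment):
    """Bag-of-words counts: a simple stand-in for a language-model embedding."""
    return Counter(comment.lower().split())


def cosine_distance(a, b):
    """Return 0.0 for identical word profiles and 1.0 for no shared words."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def mean_pairwise_distance(comments):
    """Average distance over all pairs: a rough measure of a pool's spread."""
    vectors = [vectorize(c) for c in comments]
    pairs = list(combinations(vectors, 2))
    if not pairs:
        return 0.0
    return sum(cosine_distance(a, b) for a, b in pairs) / len(pairs)


# Hypothetical comments, invented for this sketch (not from the study).
ai_comments = [
    "the report clearly describes the evaluation methodology",
    "the report clearly describes the evaluation findings",
    "the report clearly describes the evaluation scope",
]
human_comments = [
    "sampling ignores remote villages reached only by boat",
    "gender analysis is thin given local inheritance customs",
    "strong triangulation of ministry interviews and survey data",
]

ai_spread = mean_pairwise_distance(ai_comments)
human_spread = mean_pairwise_distance(human_comments)
print(f"AI spread: {ai_spread:.2f}, human spread: {human_spread:.2f}")
```

In this toy case the repetitive AI-style comments produce a much smaller spread than the varied human-style comments, which mirrors the pattern the researchers describe. A real analysis would likely use richer text representations than word counts.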

A linguistic map of AI and human comments on a set of reports.

These gaps suggest that general-purpose AI systems have limitations in tasks like report assessment. Rather than reducing costs, such tools may shift work back onto humans, who must spend additional time and effort interpreting and correcting AI outputs.